News (Proprietary)

OpenAI Debuts GPT-5.1-Codex-Max, a Long-Horizon Agentic Coding Model With Compaction for Multi-Window Workflows

1+ week, 3+ day ago (451+ words) OpenAI has introduced GPT-5.1-Codex-Max, a frontier agentic coding model designed for long-running software engineering tasks that span millions of tokens and multi-hour sessions. It is available today inside Codex in the CLI, IDE extension, cloud integration and code review surfaces, with API access planned soon. GPT-5.1-Codex-Max is built on an update to OpenAI's foundational reasoning model. This base model is trained on agentic tasks across software engineering, math, research and other domains. On top of this, GPT-5.1-Codex-Max is trained on real-world software engineering workloads such as PR creation, code review, frontend coding and Q&A. The model targets frontier coding evaluations rather than general chat. GPT-5.1-Codex-Max and the broader Codex family are recommended only for agentic coding tasks in Codex or Codex-like environments, not as a drop-in replacement for GPT-5.1 in general…

Focal Loss vs Binary Cross-Entropy: A Practical Guide for Imbalanced Classification

1+ week, 5+ day ago (442+ words) Binary cross-entropy (BCE) is the default loss function for binary classification, but it breaks down badly on imbalanced datasets. The reason is subtle but important: BCE weighs mistakes from both classes equally, even when one class is extremely rare. Imagine two predictions: a minority-class sample with true label 1 predicted at 0.3, and a majority-class sample with true label 0 predicted at 0.7. Both produce the same BCE value: -log(0.3). But should these two errors be treated equally? In an imbalanced dataset, definitely not: the mistake on the minority sample is far more costly. This is exactly where Focal Loss comes in. It reduces the contribution of easy, confident predictions and amplifies the impact of difficult, minority-class examples. As a result, the model focuses less on the overwhelmingly easy majority class and more on the patterns that actually matter. In…
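
The two predictions described above can be checked numerically. The following is a minimal sketch, not the article's code: it uses the common focal loss form -alpha_t * (1 - p_t)^gamma * log(p_t), and the values `gamma=2.0` and `alpha=0.75` (the weight given to the minority class, label 1) are assumptions chosen for illustration.

```python
import math


def bce(p, y):
    """Binary cross-entropy for one prediction p (probability of class 1) and label y."""
    p_t = p if y == 1 else 1.0 - p  # probability assigned to the true class
    return -math.log(p_t)


def focal_loss(p, y, gamma=2.0, alpha=0.75):
    """Focal loss for one prediction; gamma and alpha are illustrative assumptions."""
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class weight: alpha for label 1
    # (1 - p_t) ** gamma shrinks the loss of confident, correct predictions.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


minority = (0.3, 1)  # true label 1 predicted at 0.3
majority = (0.7, 0)  # true label 0 predicted at 0.7
easy = (0.05, 0)     # confident, correct majority-class prediction

for name, (p, y) in [("minority", minority), ("majority", majority), ("easy", easy)]:
    print(f"{name}: bce={bce(p, y):.4f} focal={focal_loss(p, y):.4f}")
```

Under these assumed settings, BCE scores the two mistakes identically, while the focal loss weights the minority-class mistake three times as heavily and nearly zeroes out the easy example.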

A Coding Implementation to Build Neural Memory Agents with Differentiable Memory, Meta-Learning, and Experience Replay for Continual Adaptation in Dynamic Environments

2+ week, 6+ day ago (967+ words) First published on MarkTechPost. In this tutorial, we explore how neural memory agents can learn continuously without forgetting past experiences. We design a memory-augmented neural network that integrates a Differentiable Neural Computer (DNC) with experience replay and meta-learning to adapt quickly to new tasks while retaining prior knowledge. By implementing this approach in PyTorch, we demonstrate how content-based memory addressing and prioritized…

How to Build a Neuro-Symbolic Hybrid Agent that Combines Logical Planning with Neural Perception for Robust Autonomous Decision-Making

5+ day, 18+ hour ago (580+ words) First published on MarkTechPost. In this tutorial, we demonstrate how to combine the strengths of symbolic reasoning with neural learning to build a powerful hybrid agent. We focus on creating a neuro-symbolic architecture that uses classical planning for structure, rules, and goal-directed behavior, while neural networks handle perception and action refinement. As we walk through the code, we see how both layers interact in real…

How to Design an Advanced Multi-Agent Reasoning System with spaCy Featuring Planning, Reflection, Memory, and Knowledge Graphs

2+ week, 2+ day ago (616+ words) First published on MarkTechPost. In this tutorial, we build an advanced Agentic AI system using spaCy, designed to allow multiple intelligent agents to reason, collaborate, reflect, and learn from experience. We work through the entire pipeline step by step, observing how each agent processes tasks using planning, memory, communication, and semantic reasoning. By the end, we see how the system evolves into a dynamic multi-agent architecture…

Meta AI Releases Omnilingual ASR: A Suite of Open-Source Multilingual Speech Recognition Models for 1600+ Languages

2+ week, 3+ day ago (482+ words) OpenAI has released GPT-5.1 as the next iteration in the GPT-5 family, with 2 core variants: GPT-5.1 Instant and GPT-5.1 Thinking. The update focuses on 3 axes: adaptive reasoning behavior, clearer explanations, and stronger control over tone and safety. Instruction following is another explicit target. In OpenAI's examples, GPT-5.1 Instant is more reliable on constraints such as "always respond with 6 words" and maintains that constraint across turns. This is relevant when you build tools that rely on strict formats or short natural language responses, for example structured outputs, message templates, or chained tools that expect bounded length. The combination of adaptive reasoning and stricter instruction adherence makes GPT-5.1 Instant a more predictable front end for many agent workflows where most calls are simple, but a tail of calls requires deeper reasoning. GPT-5.1 Thinking takes the GPT-5 Thinking approach and tightens how thinking…

Anthropic Turns MCP Agents Into Code First Systems With 'Code Execution With MCP' Approach

3+ week, 1+ day ago (549+ words) MCP is an open standard that lets AI applications connect to external systems through MCP servers that expose tools. These tools let a model query databases, call APIs, or work with files through a unified interface. In the default pattern, an agent loads many tool definitions into the model context. Each tool definition contains schema information and metadata. Intermediate results from each tool call are also streamed back into the context so the model can decide the next call. When there are many MCP servers and many tools, this pattern does not scale. The model pays to read large tool catalogs and to move large payloads between tools. Latency increases, costs grow, and context limits become a hard cap on system behavior. Anthropic's proposal is to place MCP inside a code execution loop. Instead of letting the model call tools…

Build an Autonomous Wet-Lab Protocol Planner and Validator Using Salesforce CodeGen for Agentic Experiment Design and Safety Optimization

3+ week, 2+ day ago (199+ words) We begin by importing essential libraries and loading the Salesforce CodeGen-350M-mono model locally for lightweight, API-free inference. We initialize both the tokenizer and model with float16 precision and automatic device mapping to ensure compatibility and speed on Colab GPUs. We define the ProtocolParser and InventoryManager classes to extract structured experimental details and verify reagent inventory. We parse each protocol step for duration, temperature, and safety markers, while the inventory manager validates stock levels, expiry dates, and reagent availability through fuzzy matching. We construct the agent loop, integrating perception, planning, validation, and revision into a single, coherent flow. We use CodeGen for reasoning-based optimization to refine step sequencing and propose practical improvements for efficiency and parallel execution. We create output generators that transform results into human-readable Markdown checklists and Gantt-compatible CSVs. We ensure that every execution produces clear summaries of reagents,…
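
The step parsing and fuzzy inventory lookup mentioned above might look roughly like the sketch below. It is not the tutorial's `ProtocolParser` or `InventoryManager`: the function names `parse_step` and `find_reagent`, the regular expressions, the `SAFETY_WORDS` list, and the 0.6 similarity cutoff are all hypothetical choices made for illustration, using only Python's standard `re` and `difflib` modules.

```python
import re
from difflib import get_close_matches

# Hypothetical list of safety markers to look for in a step.
SAFETY_WORDS = ("fume hood", "gloves", "toxic", "corrosive", "flammable")


def parse_step(text):
    """Extract duration, temperature (Celsius), and safety markers from one step."""
    duration = re.search(
        r"(\d+(?:\.\d+)?)\s*(min|minutes|h|hours|s|seconds)\b", text, re.I
    )
    temperature = re.search(r"(-?\d+(?:\.\d+)?)\s*°?\s*C\b", text)
    lowered = text.lower()
    return {
        "duration": (float(duration.group(1)), duration.group(2).lower())
        if duration else None,
        "temperature_c": float(temperature.group(1)) if temperature else None,
        "safety": [word for word in SAFETY_WORDS if word in lowered],
    }


def find_reagent(name, inventory):
    """Return the inventory key closest to `name`, or None if nothing is similar."""
    by_lower = {key.lower(): key for key in inventory}
    matches = get_close_matches(name.lower(), list(by_lower), n=1, cutoff=0.6)
    return by_lower[matches[0]] if matches else None


step = parse_step("Incubate at 37 °C for 30 min in a fume hood")
inventory = {"Ethanol": 2, "Methanol": 1}  # hypothetical stock counts
print(step)
print(find_reagent("etanol", inventory))
```

A real validator would also need stock-level and expiry-date checks, which the snippet mentions but this sketch leaves out.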

A Coding Implementation to Build and Train Advanced Architectures with Residual Connections, Self-Attention, and Adaptive Optimization Using JAX, Flax, and Optax

2+ week, 5+ day ago (299+ words) We begin by installing and importing JAX, Flax, and Optax, along with essential utilities for numerical operations and visualization. We check our device setup to ensure that JAX is running efficiently on available hardware. This setup forms the foundation for the entire training pipeline. We define a deep neural network that combines residual blocks and a self-attention mechanism for enhanced feature learning. We construct the layers modularly, ensuring that the model can capture both spatial and contextual relationships. This design enables the network to generalize effectively across various types of input data. We create a custom training state that tracks model parameters and batch statistics. We also define a learning rate schedule with warmup and cosine decay, paired with an AdamW optimizer that includes gradient clipping and weight decay. This…
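
To make the shape of a warmup plus cosine-decay schedule concrete, here is a dependency-free sketch in plain Python. It is not the tutorial's Optax code: the function name `warmup_cosine_lr` and the values for `peak_lr`, `warmup_steps`, `total_steps`, and `end_lr` are assumptions for illustration, and the linear warmup from zero is one common convention among several.

```python
import math


def warmup_cosine_lr(step, peak_lr=1e-3, warmup_steps=100, total_steps=1000, end_lr=0.0):
    """Learning rate at `step`: linear warmup to peak_lr, then cosine decay to end_lr."""
    if step < warmup_steps:
        # Linear ramp from 0 up to peak_lr over the warmup phase.
        return peak_lr * step / warmup_steps
    # Fraction of the decay phase completed, clamped to [0, 1].
    progress = min(1.0, (step - warmup_steps) / max(1, total_steps - warmup_steps))
    cosine = 0.5 * (1.0 + math.cos(math.pi * progress))  # goes from 1 down to 0
    return end_lr + (peak_lr - end_lr) * cosine


for step in (0, 50, 100, 550, 1000):
    print(step, warmup_cosine_lr(step))
```

The warmup phase keeps early updates small while optimizer statistics are still noisy, and the cosine phase lowers the rate smoothly toward the end of training.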

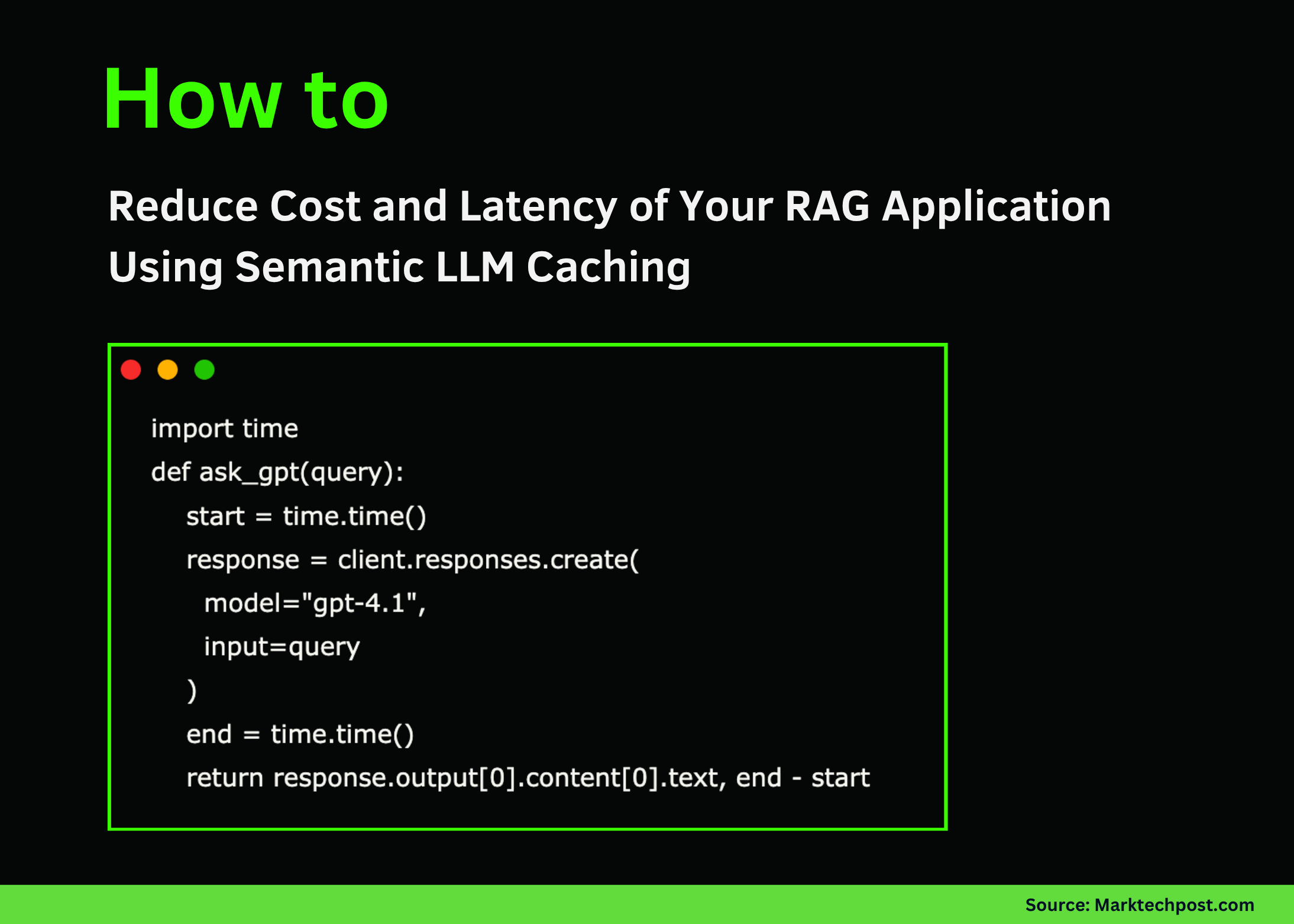

How to Reduce Cost and Latency of Your RAG Application Using Semantic LLM Caching

2+ week, 5+ day ago (425+ words) Semantic caching functions by storing and retrieving responses based on the meaning of user queries rather than their exact wording. Each incoming query is converted into a vector embedding that represents its semantic content. The system then performs a similarity search, often using Approximate Nearest Neighbor (ANN) techniques, to compare this embedding with those already stored in the cache. In a RAG application, semantic caching only stores responses for queries that have actually been processed by the system; there's no pre-caching of all possible questions. Each query that reaches the LLM and produces an answer can create a cache entry containing the query's embedding and corresponding response. Depending on the system's design, the cache may store just the final LLM outputs, the retrieved documents, or both. To maintain efficiency, cache entries are managed through policies like time-to-live (TTL) expiration or Least Recently Used…
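
The lookup flow described above can be sketched in a few lines. This is an illustrative toy, not the article's implementation: `toy_embed` is a bag-of-words stand-in for a real embedding model, the search is a brute-force linear scan rather than an ANN index, and the `SemanticCache` class, its `threshold`, and its `ttl_seconds` parameter are hypothetical names and values. Only TTL expiration is shown.

```python
import math
import time
from collections import Counter


def toy_embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


class SemanticCache:
    def __init__(self, threshold=0.8, ttl_seconds=3600.0):
        self.threshold = threshold
        self.ttl_seconds = ttl_seconds
        self.entries = []  # list of (embedding, response, created_at)

    def get(self, query, now=None):
        """Return a cached response for a similar enough query, else None."""
        now = time.time() if now is None else now
        # TTL policy: drop entries older than ttl_seconds.
        self.entries = [e for e in self.entries if now - e[2] < self.ttl_seconds]
        q = toy_embed(query)
        # Brute-force scan; a production system would use an ANN index here.
        best = max(self.entries, key=lambda e: cosine(q, e[0]), default=None)
        if best is not None and cosine(q, best[0]) >= self.threshold:
            return best[1]
        return None

    def put(self, query, response, now=None):
        """Store the response of a query that actually reached the LLM."""
        now = time.time() if now is None else now
        self.entries.append((toy_embed(query), response, now))
```

In use, the application would call `get` first and fall back to the LLM on a miss, then call `put` with the fresh answer. The similarity threshold trades hit rate against the risk of returning an answer to a different question.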
