News (Proprietary)

Meta AI Researchers Introduce Matrix: A Ray-Native, Decentralized Framework for Multi-Agent Synthetic Data Generation

12+ hour, 44+ min ago (444+ words) Traditional agent frameworks keep workflow state and control logic inside a central orchestrator. Every agent call, tool call and retry goes through that controller. This model is easy to reason about, but it does not scale well when you need tens of thousands of concurrent synthetic dialogues or tool trajectories. Matrix's decentralized design reduces idle time when different trajectories have very different lengths. It also makes fault handling local to a task. If one orchestrator fails, it does not stall a batch. Matrix runs on a Ray cluster that is usually launched on SLURM. Ray provides distributed actors and queues. Ray Serve exposes LLM endpoints behind vLLM and SGLang, and can also route to external APIs such as Azure OpenAI or Gemini through proxy servers. Tool calls and other complex services run inside Apptainer containers. This isolates the agent runtime from…

StepFun AI Releases Step-Audio-R1: A New Audio LLM that Finally Benefits from Test Time Compute Scaling

1+ day, 29+ min ago (359+ words) Most current audio models inherit their reasoning behavior from text training. They learn to reason as if they read transcripts, not as if they listen. The StepFun team calls this Textual Surrogate Reasoning. The model uses imagined words and descriptions instead of acoustic cues such as pitch contour, rhythm, timbre or background noise patterns. The architecture stays close to the previous Step Audio systems. The decoder always produces an explicit reasoning block inside opening and closing tags, followed by the final answer. This separation lets training objectives shape the structure and content of reasoning without losing focus on task accuracy. The model is released as a 33B-parameter audio-text-to-text model on Hugging Face under Apache 2.0. The pipeline has a supervised cold-start stage and a reinforcement learning stage that both mix text and audio tasks. Supervised learning trains Step-Audio-R1 to…

NVIDIA AI Releases Orchestrator-8B: A Reinforcement Learning Trained Controller for Efficient Tool and Model Selection

1+ day, 18+ hour ago (408+ words) How can an AI system learn to pick the right model or tool for each step of a task instead of always relying on one large model for everything? NVIDIA researchers release ToolOrchestra, a novel method for training a small language model to act as the orchestrator, the "brain" of a heterogeneous tool-use agent. ToolOrchestra instead trains a small orchestrator explicitly for this routing problem, using reinforcement learning over full multi-turn trajectories. Orchestrator-8B is an 8B-parameter decoder-only Transformer. It is built by fine-tuning Qwen3-8B as an orchestration model and released on Hugging Face. ToolOrchestra formulates the whole workflow as a Markov Decision Process. The state contains the conversation history, past tool calls and observations, and user preferences. Actions are the next text step, including both reasoning tokens and a tool call schema. After up to 50 steps, the environment…
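
The Markov Decision Process framing in the snippet above can be sketched as plain data structures plus a capped rollout loop. This is only an illustrative sketch, not ToolOrchestra's code: the class names, the `rollout` function, and the convention that an action without a tool call ends the episode are all assumptions. Only the state contents, the action contents, and the 50-step cap come from the snippet.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

MAX_STEPS = 50  # step cap mentioned in the snippet


@dataclass
class ToolCall:
    tool: str          # hypothetical tool or model name
    arguments: dict    # arguments following the tool's call schema


@dataclass
class Action:
    reasoning: str                        # reasoning tokens for this step
    tool_call: Optional[ToolCall] = None  # assumption: None means "final answer"


@dataclass
class State:
    history: list = field(default_factory=list)      # conversation history
    tool_log: list = field(default_factory=list)     # (tool call, observation) pairs
    preferences: dict = field(default_factory=dict)  # user preferences


def rollout(state: State,
            policy: Callable[[State], Action],
            run_tool: Callable[[ToolCall], str],
            max_steps: int = MAX_STEPS) -> int:
    """Run one episode and return the number of steps taken."""
    for step in range(1, max_steps + 1):
        action = policy(state)
        state.history.append(action.reasoning)
        if action.tool_call is None:
            return step  # policy chose to stop
        observation = run_tool(action.tool_call)
        state.tool_log.append((action.tool_call, observation))
    return max_steps


def demo_policy(state: State) -> Action:
    # Toy policy: call a hypothetical "calc" tool twice, then stop.
    if len(state.tool_log) < 2:
        return Action("need a calculation", ToolCall("calc", {"x": 1}))
    return Action("done")
```

Calling `rollout(State(), demo_policy, lambda call: "ok")` runs the toy policy for three steps: two tool calls and one final step.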

Tencent Hunyuan Releases HunyuanOCR: a 1B Parameter End to End OCR Expert VLM

4+ day, 3+ hour ago (486+ words) Tencent Hunyuan has released HunyuanOCR, a 1B-parameter vision language model that is specialized for OCR and document understanding. The model is built on Hunyuan's native multimodal architecture and runs spotting, parsing, information extraction, visual question answering, and text image translation through a single end-to-end pipeline. HunyuanOCR is a lightweight alternative to general VLMs such as Gemini 2.5 and Qwen3 VL that still matches or surpasses them on OCR-centric tasks. It targets production use cases like document parsing, card and receipt extraction, video subtitle extraction, and multilingual document translation. The Adaptive MLP Connector performs learnable pooling on the spatial dimension. It compresses the dense visual tokens into a shorter sequence, while keeping information from text-dense regions. This reduces the sequence length passed to the language model and lowers compute, while preserving OCR-relevant details. The language model is based on…

Salesforce AI Research Introduces xRouter: A Reinforcement Learning Router for Cost Aware LLM Orchestration

5+ day, 4+ hour ago (392+ words) When your application can call many different LLMs with very different prices and capabilities, who should decide which one answers each request? The Salesforce AI Research team introduces xRouter, a tool-calling-based routing system that targets this gap with a reinforcement-learning-based router that learns when to answer locally and when to call external models, while tracking cost at the token level. Routing is framed as a reinforcement learning problem. For each episode, the reward combines a binary success signal and a cost penalty. The research team defines a reward that gives a fixed bonus when the final answer is correct, then subtracts a term proportional to the total normalized cost of all model calls. If the answer is wrong, the reward is zero regardless of how cheap it was. Failed trajectories, such as wrong answers from expensive models or unnecessary calls…
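
The reward described above can be written as a small function. This is a minimal sketch under stated assumptions: the function name `routing_reward` and the default values of `bonus` and `cost_weight` are hypothetical, since the snippet only says the bonus is fixed and the penalty is proportional to total normalized cost.

```python
def routing_reward(correct: bool,
                   call_costs: list,
                   bonus: float = 1.0,
                   cost_weight: float = 0.5) -> float:
    """Success-gated, cost-penalized reward for one routing episode.

    correct     -- whether the final answer was right (binary success signal)
    call_costs  -- normalized cost of each model call made in the episode
    bonus       -- fixed bonus for a correct answer (value is an assumption)
    cost_weight -- proportionality constant for the penalty (an assumption)
    """
    if not correct:
        # A wrong answer earns zero, however cheap the calls were.
        return 0.0
    return bonus - cost_weight * sum(call_costs)
```

With these illustrative defaults, a correct answer that used two calls with normalized costs 0.2 and 0.3 scores 1.0 - 0.5 * 0.5 = 0.75, while any wrong answer scores 0.0.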

Agent0: A Fully Autonomous AI Framework that Evolves High-Performing Agents without External Data through Multi-Step Co-Evolution

5+ day, 16+ hour ago (661+ words) Large language models need huge human datasets, so what happens if the model must create all its own curriculum and teach itself to use tools? A team of researchers from UNC-Chapel Hill, Salesforce Research and Stanford University introduces Agent0, a fully autonomous framework that evolves high-performing agents without external data through multi-step co-evolution and seamless tool integration. Agent0 targets mathematical and general reasoning. It shows that careful task generation and tool-integrated rollouts can push a base model beyond its original capabilities, across ten benchmarks. Agent0 starts from a base policy π_base, for example Qwen3 4B Base or Qwen3 8B Base. It clones this policy into: Training proceeds in iterations with two stages per iteration: This loop creates a feedback cycle. As the executor becomes stronger by using the code interpreter, the curriculum must generate more complex, tool-reliant problems to keep its reward high. The curriculum reward…

How to Build a Neuro-Symbolic Hybrid Agent that Combines Logical Planning with Neural Perception for Robust Autonomous Decision-Making

5+ day, 17+ hour ago (580+ words) In this tutorial, we demonstrate how to combine the strengths of symbolic reasoning with neural learning to build a powerful hybrid agent. We focus on creating a neuro-symbolic architecture that uses classical planning for structure, rules, and goal-directed behavior, while neural networks handle perception and action refinement. As we walk through the code, we see how both layers interact in real…

Moonshot AI Researchers Introduce Seer: An Online Context Learning System for Fast Synchronous Reinforcement Learning (RL) Rollouts

1+ week, 16+ hour ago (524+ words) Modern reasoning RL workloads use long chain-of-thought-style outputs. In the Seer experiments, the researchers apply GRPO to three different models: Moonlight, Qwen2 VL 72B and Kimi K2. These workloads run on 32 compute nodes with 8 H800 GPUs per node. The three tasks use 32, 128 and 256 GPUs respectively, with 400, 600 and 800 prompts per iteration and 8 or 16 responses per prompt. Maximum generation length is large. Moonlight is configured for 65,536 tokens, Qwen2 VL 72B for 40,960 tokens and Kimi K2 for 98,304 tokens. A single long chain-of-thought request can grow from a few hundred megabytes of KVCache to tens of gigabytes as decoding progresses. This memory growth forces instances to reduce concurrency or to preempt requests, which triggers expensive re-decoding. The research team defines tail requests as the last 10 percent of requests to finish in a rollout. For Moonlight and Qwen2 VL 72B, this tail alone can consume up to 50 percent…
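
To make the tail-request definition concrete, here is one possible way to measure how much of a rollout is spent waiting on the tail. It is a sketch, not Seer's own metric: the function name `tail_time_share` is hypothetical, and it assumes all requests start at time zero and that the tail's cost is the wall-clock time after the last non-tail request has finished.

```python
import math


def tail_time_share(finish_times: list, tail_fraction: float = 0.10) -> float:
    """Share of rollout wall-clock time during which only tail requests run.

    finish_times  -- completion time of each request, measured from rollout start
    tail_fraction -- fraction of requests counted as the tail (10 percent here)
    """
    if not finish_times:
        raise ValueError("finish_times must not be empty")
    ordered = sorted(finish_times)
    total = ordered[-1]  # the rollout ends when the slowest request ends
    if total <= 0:
        raise ValueError("finish times must be positive")
    n_tail = max(1, math.ceil(len(ordered) * tail_fraction))
    head = ordered[: len(ordered) - n_tail]  # the requests that are not in the tail
    head_done = head[-1] if head else 0.0    # when the last non-tail request ends
    return (total - head_done) / total
```

For example, if nine requests finish at 10 seconds and one straggler finishes at 20 seconds, `tail_time_share([10] * 9 + [20])` gives 0.5: half of the rollout is spent waiting on a single request.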

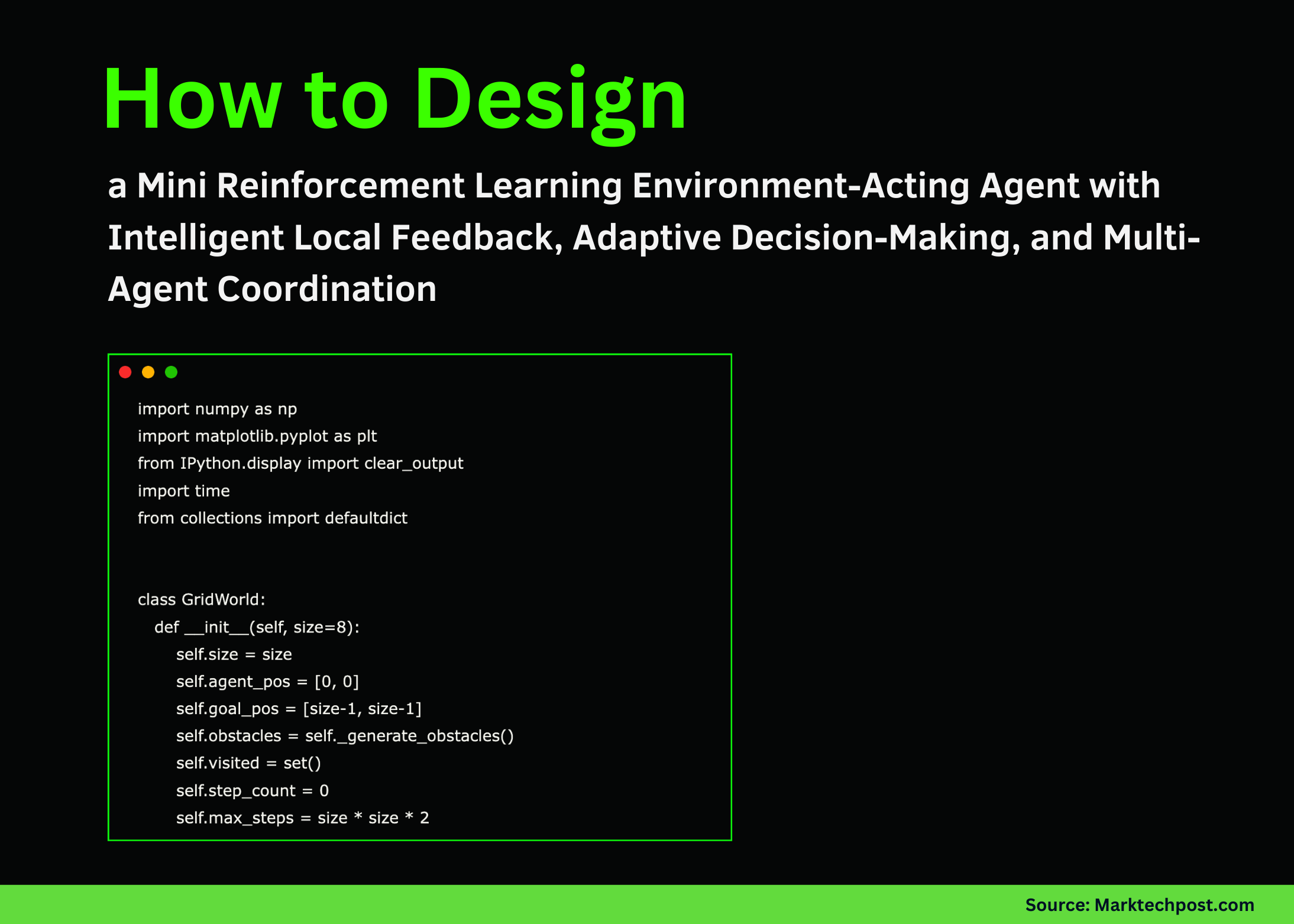

How to Design a Mini Reinforcement Learning Environment-Acting Agent with Intelligent Local Feedback, Adaptive Decision-Making, and Multi-Agent Coordination

1+ week, 17+ hour ago (275+ words) We set up the entire GridWorld environment and define how the agent, goal, and obstacles exist in it. We establish the structure for state representation and valid movements, and we prepare the environment so we can interact with it dynamically. As we run this part, we see the world taking shape and becoming ready for the agents to explore. We define how each step in the environment works and how the world is visually rendered. We calculate rewards, detect collisions, track progress, and display everything through a clean grid visualization. As we execute this logic, we watch the agent's journey unfold in real time with clear feedback. We implement the Action Agent and Tool Agent, giving the system both learning capability and analytical feedback. We observe how the Action Agent…
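
The tutorial's own code is not reproduced in this snippet, so the following is only a rough sketch of what such an environment might look like. The class layout, grid size, obstacle positions, and reward values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass

# Row/column offsets for each movement (names are illustrative).
MOVES = {"up": (-1, 0), "down": (1, 0), "left": (0, -1), "right": (0, 1)}


@dataclass
class GridWorld:
    size: int = 5
    goal: tuple = (4, 4)
    obstacles: frozenset = frozenset({(1, 1), (2, 3)})
    agent: tuple = (0, 0)
    steps: int = 0  # progress counter

    def _blocked(self, cell: tuple) -> bool:
        row, col = cell
        out_of_bounds = not (0 <= row < self.size and 0 <= col < self.size)
        return out_of_bounds or cell in self.obstacles

    def valid_moves(self) -> list:
        """Names of the moves that do not hit a wall or an obstacle."""
        row, col = self.agent
        return [name for name, (dr, dc) in MOVES.items()
                if not self._blocked((row + dr, col + dc))]

    def step(self, action: str):
        """Apply one move and return (state, reward, done)."""
        self.steps += 1
        dr, dc = MOVES[action]
        target = (self.agent[0] + dr, self.agent[1] + dc)
        if self._blocked(target):
            return self.agent, -1.0, False  # collision: stay put, assumed penalty
        self.agent = target
        if target == self.goal:
            return self.agent, 10.0, True   # assumed goal reward
        return self.agent, -0.1, False      # assumed small per-step cost

    def render(self) -> str:
        """Text grid: A = agent, G = goal, # = obstacle, . = free cell."""
        lines = []
        for row in range(self.size):
            cells = []
            for col in range(self.size):
                cell = (row, col)
                if cell == self.agent:
                    cells.append("A")
                elif cell == self.goal:
                    cells.append("G")
                elif cell in self.obstacles:
                    cells.append("#")
                else:
                    cells.append(".")
            lines.append(" ".join(cells))
        return "\n".join(lines)
```

A typical interaction is `env = GridWorld()`, then `env.step("right")` followed by `print(env.render())` to see the agent move one cell along the top row.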

An Implementation of Fully Traced and Evaluated Local LLM Pipeline Using Opik for Transparent, Measurable, and Reproducible AI Workflows

1+ week, 2+ day ago (395+ words) We set up our environment by installing the required libraries and initializing Opik. We load the core modules, detect the device, and configure our project so that every trace flows into the correct workspace. We lay the foundation for the rest of the tutorial. We load a lightweight Hugging Face model and create a small helper function to generate text cleanly. We prepare the LLM to operate locally without external APIs. This gives us a reliable and reproducible generation layer for the rest of the pipeline. We define two structured prompts using Opik's Prompt class. We control the planning phase and answering phase through clear templates. This helps us maintain consistency and observe how structured prompting impacts model behavior. We construct a tiny document store…
